AI users report pain points and challenges; tech advisors better know them

AI technologies might force a rethinking of some longstanding assumptions about technology maturation and adoption. Generative AI has largely started its slide toward the “trough of disillusionment” on the famed Gartner hype cycle, and agentic AI has likewise pushed past its “peak of inflated expectations,” yet there doesn’t appear to be the expected amount of disillusionment around AI adoption. Indeed, despite research and reports of bubbles, gaps and pilots failing to take off toward implementation, surveys suggest C-suites, boardrooms and IT decision-makers are moving full steam ahead on AI investments.

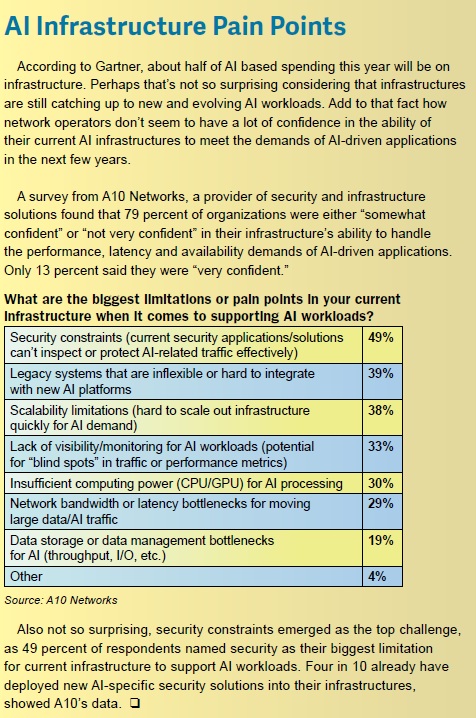

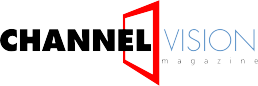

According to a recently released report from Accenture, for example, 86 percent of C-suite leaders plan to increase AI investment in 2026. A similar share (78 percent) said they now see AI as more beneficial to revenue growth than cost reduction, up from 65 percent who said the same in June 2024. Even if the proverbial “AI bubble” were to burst, a significant percentage of executives said they would increase their spending on AI. “AI remains the centerpiece of 2026 investment strategies,” said Accenture analysts.

In other words, business leadership is not dissuaded by the fact that fewer employees said they regularly work with AI agents: 32 percent in January 2026, down from 42 percent in 2025, according to Accenture findings. Similarly, leaders are not disillusioned by the growing share of employees who said they’ve “used or tested AI agents but don’t work with them regularly,” which rose from 36 percent in January of last year to 43 percent this January.

“The positivity reverberating across the C-suite does not align with what their workforce is experiencing, even though talent is the primary accelerator of AI scale,” Accenture researchers noted.

Providers and distributors of AI-oriented technologies and solutions, on the other hand, can’t afford to be quite as cavalier about employees’ AI misgivings. Clear pain points among AI users, as well as barriers to achieving intended outcomes, are emerging, and they seem to have come rather quickly. Providers and partners who are either unaware of known pain points or avoid acknowledging them will have a tough time establishing themselves as trusted advisors.

And make no mistake: for employees, “the underlying capabilities of AI at work remain fragile as 54 percent cite low-quality or misleading AI outputs leading to wasted time and productivity,” Accenture’s data showed.

Similarly, new research from Workday shows that while many organizations are realizing gains from AI, a substantial share of that value is being quietly lost to rework and low-quality output. Roughly 37 percent of the time saved through AI, for example, is being offset by rework, according to the SaaS provider of human capital management and financial management platforms. Only 14 percent of employees consistently achieve net-positive outcomes from AI use, showed the Workday data.

Accenture, for its part, found that 13 percent of respondents say they “frequently encounter” misleading and low-quality outputs from their AI use.

“Employees report spending significant time correcting, clarifying or rewriting low-quality AI-generated content – essentially creating an AI tax on productivity,” said the Workday study. “For every 10 hours of efficiency gained through AI, nearly four hours are lost to fixing its output.”

Interestingly enough, employees aged 25 to 34, while often assumed to adapt most easily to new technologies, account for nearly half (46 percent) of employees experiencing the highest levels of verification and correction of AI output, making them a consistent hot spot for AI-related rework.

“These employees tend to use AI frequently and with confidence, but they also report spending significantly more time auditing results – adding a hidden layer of work rather than eliminating it,” said Workday researchers. “In practice, AI accelerates output, while responsibility for ensuring quality remains squarely with the employee.”

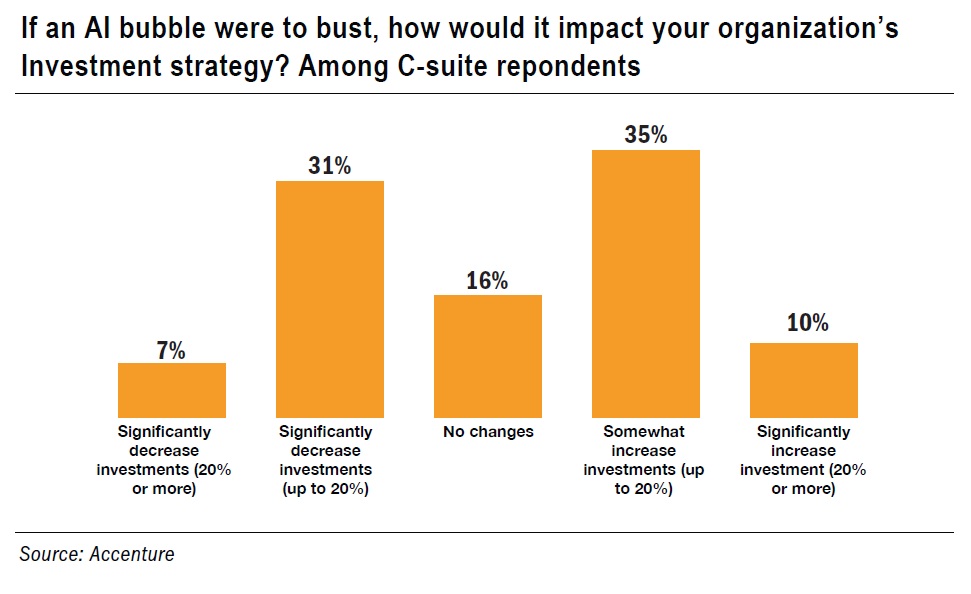

A lack of consistent accuracy in outputs seems to be creating trust issues that could prove a barrier to further adoption. According to a survey performed for enterprise agentic automation provider Camunda, 84 percent of process automation professionals are worried about the business risk of AI in day-to-day processes when IT does not have the appropriate controls in place (which is often), while 80 percent are concerned about a lack of transparency into how AI is used. What’s more, half of respondents believe untamed agentic AI risks “fanning the flames of poorly implemented processes and automations.” All told, Camunda found that almost three-quarters (73 percent) of organizations admit there is a gap between their agentic AI vision and the current reality.

“The promise of agentic AI is undeniable, but trust remains the key barrier to adoption,” said Kurt Petersen, senior vice president, customer success at Camunda. “Right now, exercising caution with agentic AI means many organizations can’t move beyond pilots or isolated use cases. Without clear guardrails and visibility, agents will stay at the edge of the business.”

Camunda’s survey bears this out. Eight in 10 process automation professionals said most of their AI agents are chatbots or assistants that simply summarize or answer questions, instead of handling mission-critical use cases. Nearly half (48 percent) said their AI agents operate in silos and are not woven into end-to-end business processes. Possibly even more concerning, 39 percent said they flat out don’t trust delegating critical tasks to AI. Likewise, just 27 percent of executives surveyed by Accenture strongly agree that they are comfortable delegating tasks to AI agents.

Incidentally, Accenture also noted signs of good old-fashioned FUD (fear, uncertainty and doubt). The IT professional services firm found that about half of workers feel secure in their jobs, down 11 percentage points from the 59 percent who said the same as recently as summer 2025. About six in 10 workers also believe that young professionals are having a harder time finding jobs due to automation and AI. The share of workers who said they “enjoy using AI and seek new ways to apply it” fell from 21 percent last summer to 17 percent this January.

Overall, studies suggest AI implementations have focused primarily on lower-level, lower-risk activities such as answering questions, summarizing information and automating repeated tasks. AI agents currently operate at the edge of business processes rather than within core, mission-critical process flows and are heavily dependent on human oversight and approval for important decisions.

There’s a growing understanding, however, that to justify deeper investment and achieve its massive potential, AI must be better applied to strategic decision-making and high-value tasks and integrated more deeply into business processes.

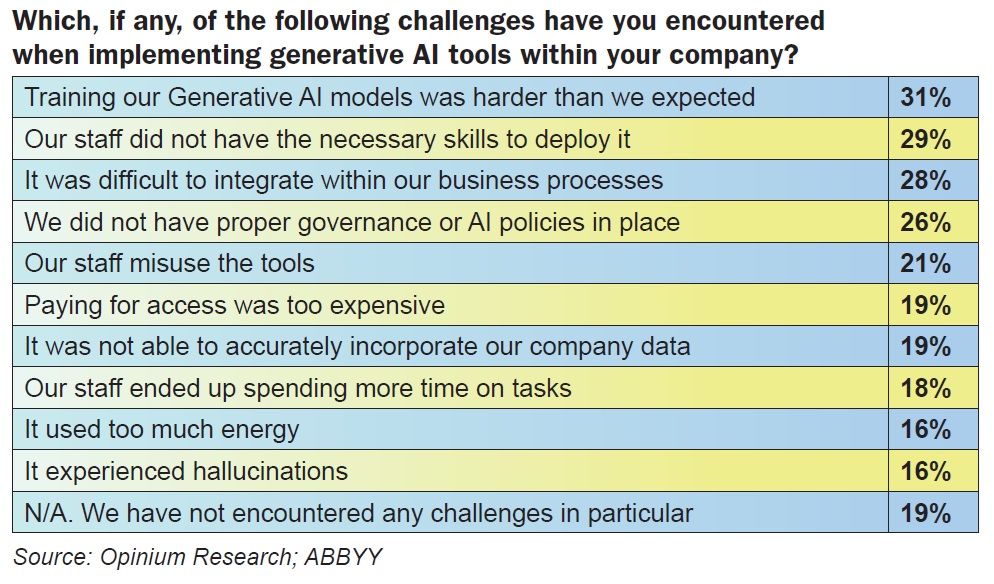

Not that it will be friction-free. About a third of IT decision-makers surveyed for process optimization company ABBYY reported struggling to integrate GenAI into business practices, while training GenAI models proved harder than expected. One root cause of such struggles is staff not having the skills necessary to train models and integrate data within business-critical processes, Camunda executives argued. And just because business leaders say their staff is being properly trained, don’t automatically believe it.

“While two-thirds of leaders (66 percent) cite skills training as a top investment priority, that investment is not consistently reaching the employees most exposed to rework,” reported the Workday study. “Among employees who use AI the most, only 37 percent report increased access to training – a nearly 30-point gap between stated intent and lived experience.”

The issue is compounded by lagging role redesign.

“[N]early nine in 10 organizations report that fewer than half of their roles have been updated to include AI-related skills,” Workday researchers continued. “AI has been layered onto roles that were never updated to accommodate it – forcing employees to use 2025 tools within 2015 job structures. Rather than reducing effort, this mismatch often increases it, as employees are left to reconcile faster production with unchanged expectations around accuracy, judgment and accountability.”

For employees already doing a large share of rework, “outdated role definitions make it harder to capture AI’s benefits,” the study warned. “Without clear expectations for how AI should be used – and where human judgment must apply – employees default to verification and correction, absorbing the cost of low-quality output themselves.”

At the same time, technology stacks are becoming more distributed and the number of endpoints involved in each process is growing, as a full 76 percent of organizations surveyed by Camunda said the volume and diversity of endpoints is increasing exponentially.

“As a result, 85 percent say they need better tools to manage the intersections between processes, highlighting the challenge organizations face in realizing full value from their AI and automation investments,” said Camunda executives.

Bridging the gap between AI vision and reality, Camunda executives argued, requires a move beyond standalone, siloed agents toward “agentic orchestration,” which enables teams to blend deterministic and dynamic orchestration of business processes, leveraging agents to add dynamic reasoning to deterministic processes so they can adapt in real time. Apparently, professionals responsible for automation within their companies tend to agree, as 88 percent said AI needs to be orchestrated across business processes if organizations are to get maximum benefit from their AI investments, while a full 90 percent said AI needs to be orchestrated like any other endpoint within automated business processes to ensure compliance with regulations.

At the same time, 85 percent also said their organization has not yet reached the right level of process maturity to implement agentic orchestration, suggesting a need to increase process maturity and AI maturity in parallel.

“Deterministic orchestration has always established structured guardrails. By blending it with dynamic orchestration patterns to leverage reasoning across AI agents, people and systems in end-to-end processes, enterprises can build a foundation for AI agents they truly trust,” said Camunda’s Petersen. “This is enterprise agentic automation in practice, and it is how organizations will turn today’s AI experiments into durable, business-critical capabilities.”

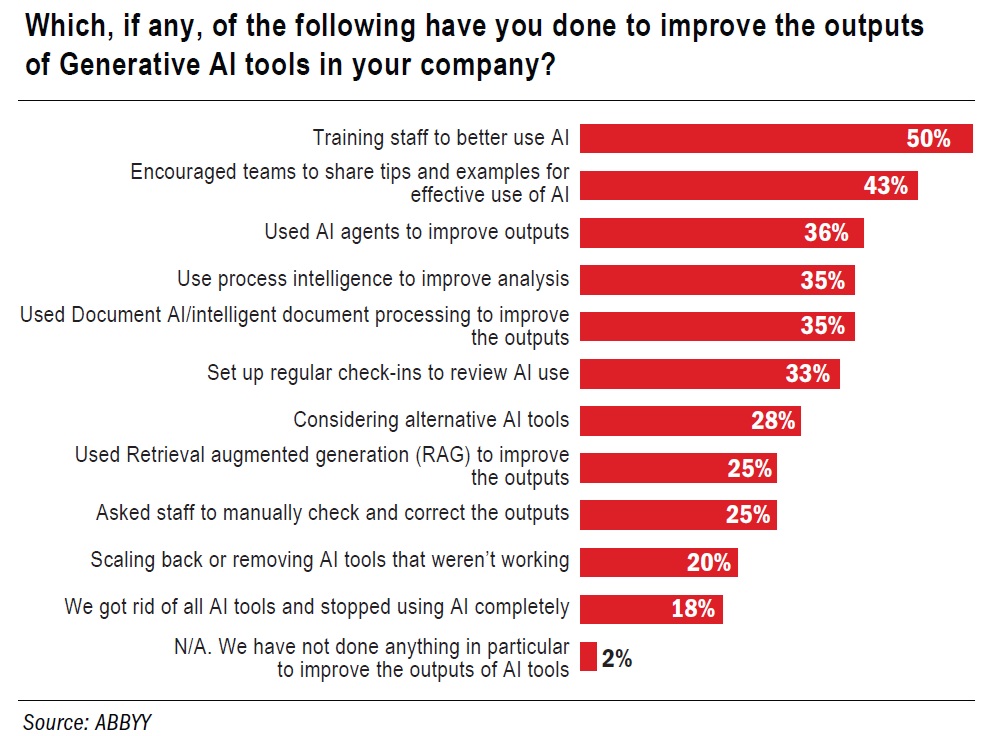

ABBYY, for its part, found that 99 percent of organizations that augmented GenAI deployments with complementary tools, such as process intelligence, Document AI and retrieval-augmented generation (RAG), reported improved outcomes, including more consistent results (50 percent), greater accuracy (43 percent), stronger trust (43 percent) and cost savings (42 percent).

The failure to address existing pain points, challenges and the gaps between AI hype and reality threatens more than the rate of growth and further investment in AI technologies. In addition to the dent it could put in a partner’s advisor status, there is the very real chance businesses could pull back on AI-oriented programs altogether. After all, Accenture’s recent study found that regular AI agent usage among employees dropped 10 points since the summer, while a soon-to-be-released survey from RingCentral found that nearly 40 percent of organizations have paused or cancelled an AI project, mostly because expectations, workflows or training weren’t aligned from the start.

The ultimate lesson for technology advisors could be to focus less on accelerating usage and more on improving how AI implementations and outcomes are designed, measured and supported.