After being overshadowed by AI, cybersecurity is primed for a spending rebound in 2026

About three years ago, before GenAI grabbed the focus of boardrooms, C-suites and just about every tech investment dollar, much of the conversation around business technology and IT spending centered on cybersecurity. Before 2023, survey after survey showed cybersecurity as the top priority among IT decision makers, and technology advisors were warned that they had better be ready to have a security conversation with their customers and prospects.

Now that AI technologies have traveled along their respective hype cycles, security is primed to return to a position of top priority among IT buyers, with 2026 expected to bring a healthy rebound in cybersecurity spending. And in a type of full circle moment, the emergence and prevalence of AI technologies are major driving forces of this cybersecurity redux.

As Channelholic analyst Rich Freeman recently pointed out, forecasts such as IDC’s $758 billion in AI infrastructure spending by 2029, and Omdia’s $267 billion global partner opportunity for AI and agentic AI services by 2030, “are unlikely to become reality unless a lot of people devote a lot of time, attention and money to AI security.”

Already, there are some indications that a security spending rebound is percolating. According to researchers at Pinpoint Search Group, for instance, 2025 ended up being “an incredibly strong year for investment in cybersecurity vendors,” with $13.97 billion raised in the fiscal year, representing a 47 percent increase in total funding compared to 2024. Funding volume in the cybersecurity sector increased by 30 percent with 392 funding rounds tracked in fiscal year 2025 compared to 304 in FY 2024. This trajectory follows a sharp contraction in 2022 and 2023, and while still below the 2021 peak of $20.6 billion, “this level of capital places 2025 nearly on par with 2022 for investment in the cybersecurity industry and firmly establishes it as the strongest funding year of the post-correction cycle, reflecting renewed confidence in the industry,” said the Pinpoint report.

Likewise, Forrester analysts expect demand for cybersecurity products and services to remain “a tech-spending bright spot, projected to grow faster than overall global commercial software.” Forrester predicted that 2025 will show a rise of 13.1 percent in cybersecurity spending year over year, hitting $174.8 billion. That will be followed by 14.5 percent growth in 2026, with cybersecurity market spending topping $200 billion for the first time.
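As a quick sanity check on those Forrester figures, the short Python sketch below works out what the quoted growth rates imply. The variable names are illustrative, and the 2024 baseline is only an implied value derived from the quoted percentages, not a number taken from Forrester.

```python
# Figures quoted from Forrester in the paragraph above.
spend_2025_bn = 174.8   # projected 2025 cybersecurity spending, in $ billions
growth_2025 = 0.131     # projected 2025 year-over-year growth (13.1 percent)
growth_2026 = 0.145     # projected 2026 year-over-year growth (14.5 percent)

# Implied 2024 baseline: back out the 2025 growth rate (derived, not quoted).
spend_2024_bn = spend_2025_bn / (1 + growth_2025)

# Implied 2026 spending: apply the 2026 growth rate to the 2025 figure.
spend_2026_bn = spend_2025_bn * (1 + growth_2026)

print(f"Implied 2024 baseline: ${spend_2024_bn:.1f} billion")
print(f"Implied 2026 spending: ${spend_2026_bn:.1f} billion")
print(f"Tops $200 billion: {spend_2026_bn > 200}")
```

Under these inputs, the 2026 figure lands just above the $200 billion mark, which is consistent with the forecast as quoted.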

Autonomous Attack

In some ways, the resurgence in security spending stems from a new sense of urgency, as IT leaders come to realize that bad actors have access to the same AI superpowers that are driving effectiveness and efficiencies in their organizations. Indeed, many believe 2026 will mark the first time that attackers achieve a fully automated security compromise from start to finish, with agentic AI executing the entire kill chain.

“Whether it’s an autonomous AI system compromising enterprise infrastructure or AI agents leaking highly sensitive data at scale, this incident will force organizations to confront a new threat paradigm,” said Dashlane chief technology officer, Frédéric Rivain, in a company blog post. “The breach will exploit the trust we’ve placed in AI systems themselves or demonstrate AI’s ability to bypass defenses that stop human attackers.”

Feeling confident that it’s your human resources director on the other end of a Zoom call asking for your password, personal information or confidential material? AI-armed attackers – with less and less technical sophistication – will utilize generative AI and deepfakes to launch incredibly convincing phishing campaigns as early as this year, experts predict, forcing organizations to rethink employee training and lock down every digital interaction.

“We’re witnessing an evolution of cyberattack methods, a wholesale transformation in how adversaries operate,” said Haider Pasha, vice president and chief security officer, EMEA, for Palo Alto Networks, in the company’s recently released “State of Cloud Security” report.

The Palo Alto Networks Unit 42 research team documented a surge in daily cyberattacks from 2.3 million to nearly 9 million in the span of a year – an almost threefold increase driven by attackers’ adoption of AI tools, said the report.

And the volume of attacks tells only part of the story. Concern also lies in velocity, said Unit 42 researchers. “Attackers have redefined their key performance indicators, dramatically reducing what we call ‘mean time to compromise’ and ‘mean time to exfiltrate,’” the report noted.

Unit 42 testing has demonstrated how breaches that took an average of 44 days in 2021 can now, with AI assistance, occur in as little as 25 minutes. “The speed, scale and sophistication we’ve observed over the past couple of years is incredible,” warned the researchers.
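To put that compression in perspective, here is a rough back-of-the-envelope calculation in Python. It compares the 2021 average against the best-case AI-assisted figure, so the result is better read as an upper bound on the speed-up than as a like-for-like average; the variable names are illustrative.

```python
# Figures quoted from Unit 42 in the paragraph above.
MINUTES_PER_DAY = 24 * 60

breach_2021_minutes = 44 * MINUTES_PER_DAY  # 44-day average in 2021
breach_ai_minutes = 25                      # "as little as" 25 minutes with AI assistance

# Ratio of the 2021 average to the AI-assisted best case.
speedup = breach_2021_minutes / breach_ai_minutes

print(f"2021 average: {breach_2021_minutes:,} minutes")
print(f"Best-case speed-up: roughly {speedup:,.0f}x")
```

In other words, a window that defenders once measured in weeks shrinks by a factor of more than 2,500 in the best case for the attacker.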

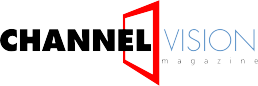

The cost per security incident also is going up, according to data from cybersecurity provider Netwrix. In 2025, 75 percent of surveyed organizations reported financial damage due to attacks – up from 60 percent in 2024, and the number of organizations estimating their damage at $200,000 or more nearly doubled, rising from 7 percent to 13 percent.

“Research strongly suggests that attackers are ahead in AI adoption, which is pushing defenders into a reactive posture,” said Jeff Warren, chief product officer at Netwrix.

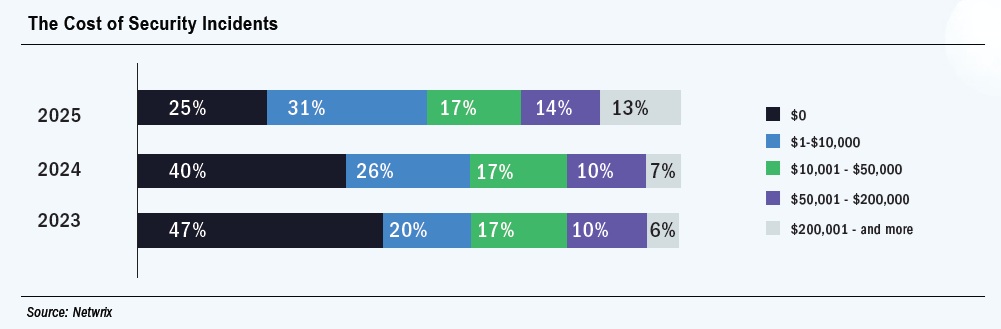

More than one-third (37 percent) of IT professionals surveyed by Netwrix said AI-driven threats already have forced them to adjust, a direct reaction to the offensive use of AI by adversaries, said Warren.

“It’s fair to say that attackers are moving faster with AI, and defenders are scrambling to catch up,” he continued. “This asymmetry is not new in cybersecurity, but AI appears to be accelerating it.”

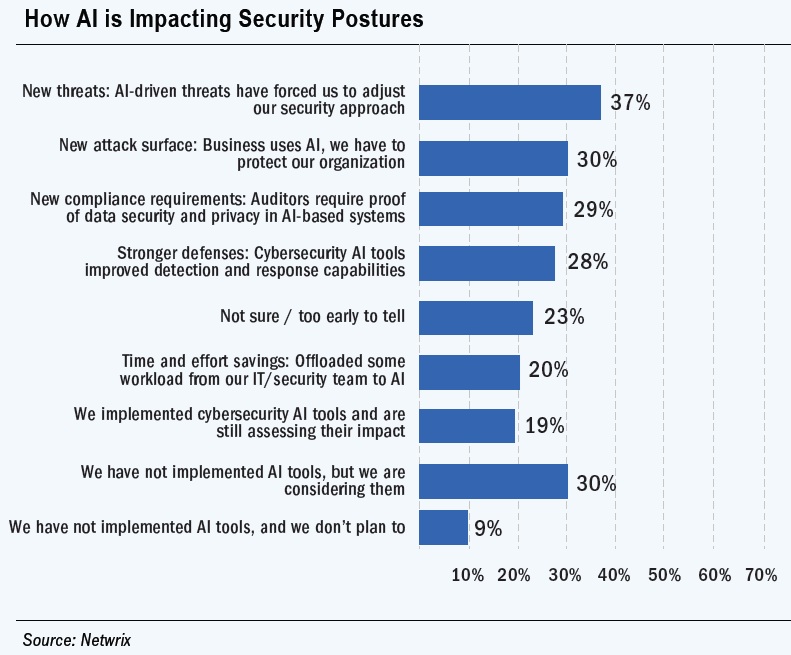

In fact, every organization surveyed by Palo Alto’s Unit 42 reported experiencing every one of the 10 measured security incidents in the past year. “Their response confirms that exposure is more a matter of operating in today’s environment than it is missteps,” said the researchers.

The most consequential trend, said Unit 42 researchers, is at the intersection of GenAI and API risk. API attacks showed the sharpest year-over-year increase at 41 percent, fueled in part by two compounding forces. First, as suggested above, generative AI has lowered the barrier to exploitation by enabling low-skilled actors to generate high-fidelity attacks. Second, the proliferation of AI agents within corporate infrastructure, including those rapidly deployed and lightly governed, has introduced prompt injection vectors and an explosion of API surfaces, they continued.

AI Sprawling

In other words, AI systems not only expand the potential attack surface, they also can weaken it in some ways. Consider, for example, the enthusiasm around deploying agentic AI to handle tasks from customer service to code generation. The rush to keep pace has led to many cases where proper security and access control were sacrificed in the name of speed. The risks are compounded by the fact that AI systems tend to be high-value assets, with broad access to proprietary data or business-critical functions; can operate across multiple systems simultaneously; and make decisions without human oversight. As Dashlane’s Rivain pointed out, this makes AI agents “both valuable and vulnerable – a losing combo.”

In turn, many security experts predict that in 2026, autonomous AI agents will become the new favorite target of threat actors, leading to a high-profile data breach.

“The security industry has spent decades securing human identities. Now, we face a more complex challenge: Securing machines that act like humans but at machine speed and scale,” said Rivain.

Defenders will need to protect AI models, training data, prompts and outputs, much as they protect proprietary code, warned Netwrix’s Schrader. “It is important to secure the entire AI lifecycle, from data ingestion to model training to monitoring API endpoints for any signs of prompt injection, abuse or model leakage.”

That includes the thousands of MCP (Model Context Protocol) servers that allow AI models to securely connect to external data sources and tools. Enabling many enterprise AI use cases, these MCP servers are often underused and lightly monitored.

“Adversaries target the tools and LLM systems, the underlying infrastructure supporting model development, the actions these systems take, and critically, their memory stores,” said Palo Alto’s Pasha. “Each represents a potential point of compromise. Our defensive posture must outpace this reality.”

Ultimately, substantial investments in AI-enabled and automated cybersecurity tools will be required to defend resources at the speed of machines. In the meantime, the foundations of hardened identities, segmented access, zero trust architecture and monitoring and governance controls are more crucial than ever.